Hugo Image Processing

Hugo, the static site generator, is a fantastic tool, as I have told you before. Since I switched to it, I’m very confident that the site is fast and responsive.

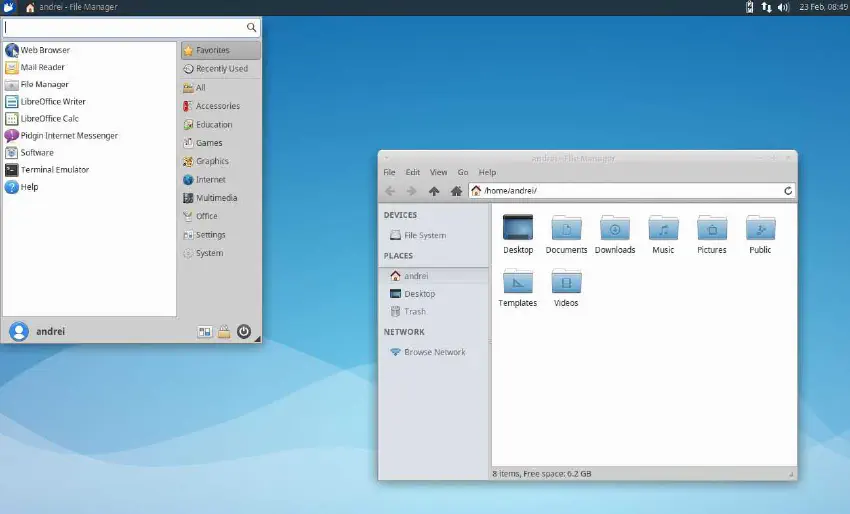

However, my site is packed full of images. Some are personal. Some are huge. Some are PNGs and some are JPGs. I created a gallery component just to handle posts that I want to fill with dozens of them.

Managing post images is a boring task. For every post, I have to check:

- Dimension

- Compression

- EXIF metadata

- Naming

Dimension

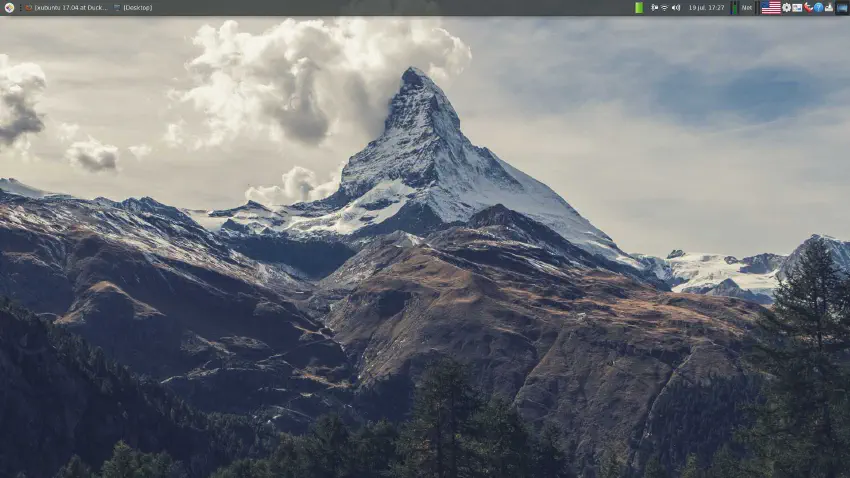

Serving an image bigger than the screen is useless. It’s a bigger file to download, consuming bandwidth for both the user and the server. Google Lighthouse and other site metric evaluators all recommend resizing images to at most the screen size.

In Hugo, I’ve automated this with its image processing functions:

{{ $image_new := ($image.Resize (printf "%dx" $width)) }}
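For context, here is a minimal sketch of how that call might sit in a template. It assumes the image lives in the post's page bundle; the file name `image-2.png` and the 850px target are placeholders, not the values this site uses. The width is capped at the original size so a small image is never upscaled, while every image still passes through `Resize`.

```go-html-template
{{/* Assumptions: page-bundle image; file name and 850px target are placeholders. */}}
{{ $width := 850 }}
{{ with .Resources.GetMatch "image-2.png" }}
  {{/* Never upscale: fall back to the original width for small images. */}}
  {{ $target := $width }}
  {{ if lt .Width $width }}
    {{ $target = .Width }}
  {{ end }}
  {{ $image_new := .Resize (printf "%dx" $target) }}
  <img src="{{ $image_new.RelPermalink }}" width="{{ $image_new.Width }}" height="{{ $image_new.Height }}" alt="">
{{ end }}
```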

Compression

My personal photos are, most of the time, taken in JPEG. Recently I changed my phone camera's default format to HEIC, which provides better compression for high-resolution photos. Browsers, however, generally do not support that format.

Some pictures used to illustrate the posts are PNGs. They have better quality at the expense of being larger. Mostly, only illustrations and images with text are worth keeping in a lossless format.

Whatever the format, I would like to compress as much as possible to waste less bandwidth. I’m currently inclined to use WebP, because it can shrink the final size considerably.

{{ $image_new := ($image.Resize (printf "%dx webp" $width)) }}
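Hugo also accepts an encoding hint and a quality value in the same option string. The sketch below assumes `$image` and `$width` are defined as in the line above. It picks the `drawing` hint for PNG sources and `photo` for everything else; the `q75` quality is only an illustrative value.

```go-html-template
{{/* Assumption: $image and $width already exist; q75 is an arbitrary example. */}}
{{ $hint := "photo" }}
{{ if eq $image.MediaType.SubType "png" }}
  {{/* PNGs here are mostly illustrations and text, so hint accordingly. */}}
  {{ $hint = "drawing" }}
{{ end }}
{{ $image_new := $image.Resize (printf "%dx webp %s q75" $width $hint) }}
```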

EXIF metadata

Every digital image has a lot, and I mean A LOT, of metadata embedded in the file. The date and time it was taken, the camera type, the phone name, and even longitude and latitude might be included by the camera app. They all reveal personal information that was supposed to stay hidden.

Before sharing images on the public internet, it is important to sanitize them all, stripping out this information. Hugo does not carry this metadata along when it generates new images. So, as long as every image gets at least a minimal resize, this matter is handled by default.
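To see what an original file would leak, Hugo can read the metadata from the source image. This is a debugging sketch only. It assumes `$image` is an original JPEG resource and a Hugo version that exposes `.Exif` on image resources; it is not meant for a published template.

```go-html-template
{{/* Debug only: print a few EXIF fields of the ORIGINAL image, if it has any. */}}
{{ with $image.Exif }}
  <ul>
    <li>Taken on: {{ .Date }}</li>
    <li>Latitude: {{ .Lat }}</li>
    <li>Longitude: {{ .Long }}</li>
  </ul>
{{ end }}
```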

Naming

I would like to have a well-organized image library, and it would be nice to standardize the file names. Using the post title to rename all images would be great, even more so if the name included a caption or a user-provided description.

However, Hugo does not allow renaming them. To make matters even worse, it appends a hash code to each file name. A simple picture.jpeg suddenly becomes picture-hue44e96c7fa2d94b6016ae73992e56fa6-80532-850x0-resize-q75-h2_box.webp.

An incomprehensible mess. If you know a better way, let me know.
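One possible way around this, assuming Hugo v0.100.0 or later (the release that added `resources.Copy`): copy the processed image to a path of your choosing and link to the copy instead. The `images/` prefix, the `-1` suffix, and the use of the post title are illustrative choices, and `$image_new` is assumed to be the processed image from the earlier `Resize` call.

```go-html-template
{{/* Assumptions: Hugo v0.100.0+; runs in a page template, so .Title is the
     post title; "images/" and "-1" are placeholders. */}}
{{ $name := printf "images/%s-1.webp" (urlize .Title) }}
{{ $renamed := resources.Copy $name $image_new }}
<img src="{{ $renamed.RelPermalink }}" alt="{{ .Title }}">
```

Hugo publishes a resource when its `RelPermalink` or `Permalink` is called, so the hashed file should stay out of public/ as long as the template links only to the copy. That is worth verifying on your own build.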

So What?

So, if most of the routines can be automated, what’s the problem?

The main issue is that Hugo has to pre-process ALL images upfront. As mentioned in the previous post, that can take a considerable amount of time, especially when converting to a computationally demanding format such as WebP.

Netlify is constantly hitting the build time limit, all because of the thousands of image compressions. I am planning to revert some of the commits in which I implemented WebP and reapply them little by little, allowing Netlify to build a version and cache the results.

There are some categories of images:

- Gallery full-size images: there are hundreds of them, so they take up a lot of the processing time, but processing strips the metadata from the originals. The upside is that they are rarely clicked and served.

- Gallery thumbnails: the actual images shown in gallery mode. They account for the biggest chunk of the main page’s overall size when a gallery is among the 10 latest posts.

- Post images: images that illustrate each article. They are resized to fit the whole page, so when compressed they represent a nice saving.

- Post top banner: some posts have a top image. They are cropped to fit a banner-like size, so they are generally not that big.
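As a rough sketch, the four groups could map to calls like the following. All dimensions are made-up placeholders, and `$image` is assumed to be an image resource already fetched from the page bundle.

```go-html-template
{{/* All sizes below are placeholders, not the values used on this site. */}}
{{ $full   := $image.Resize "1920x webp" }}   {{/* gallery full-size image */}}
{{ $thumb  := $image.Fill "300x300 webp" }}   {{/* gallery thumbnail, cropped square */}}
{{ $post   := $image.Resize "850x webp" }}    {{/* post image, page width */}}
{{ $banner := $image.Fill "1200x300 webp" }}  {{/* top banner, cropped wide */}}
```

Enabling WebP one group at a time would then mean changing one of these option strings per deploy.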

I will, in the next couple of hours, try to implement the WebP code on each of these groups. If successfully completed, it will save hundreds of megabytes in the build.

Bonus Tip

Hugo copies all resources (images, PDFs, audio, text, etc.) from the content folder to the final public/ build, even if you only use the resized ones. Not only does the build become larger, but the originals whose metadata you wanted to hide are still online, even if nothing in the HTML points to them directly.

A tip for those working with Hugo and a lot of processed images: add the following to the content front matter to instruct Hugo not to include these unused resources in the final build.

cascade:
  _build:
    publishResources: false

Let’s build.

Edit on 2021-08-25

I discovered that Netlify has a plugin ecosystem, and one of the available plugins is a Hugo caching system. It should drastically speed up build times and make it possible to convert all images to WebP once and for all. I will test this feature right now and post the results later.

Edit on 2021-09-13

The plugin worked! I had to set it up through file-based configuration instead of the easy one-click button. Build time went from 25 minutes to just 2. The current cache size is about 3.7 GB, so the earlier build times are totally understandable.

It will allow me to make much more frequent updates. OK, to be frank: build time will no longer restrict the posting frequency. However, patience, inspiration and focus are still the main constraints on blogging.

In the netlify.toml file at the repository root, I added:

# Hugo cache resources plugin
# https://github.com/cdeleeuwe/netlify-plugin-hugo-cache-resources#readme
[[plugins]]
package = "netlify-plugin-hugo-cache-resources"

  [plugins.inputs]
  # If it should show more verbose logs (optional, default = true)
  debug = true