Flax Engine

In a year full of personal experimentation in other careers (like running for congress :), I still programmed a lot. I invested a lot of time studying Docker and containers, Kubernetes, Home Assistant, and self-hosting web systems (like Nextcloud).

But game design is a passion.

I constantly get annoyed with Unity3D, and the latest events on the business side worried me even more. I tried to use Godot and failed. C# is far better in ease of use and safety than C++, and even more so than custom scripting languages. It’s more organized and well-documented, and I have years of accumulated experience with it. But the Godot-C# integration is buggy, unstable, and full of gotchas. The way they re-implemented C# in the upcoming Godot 4 required so many workarounds to work properly that I got even more frustrated. I could not use List<>!

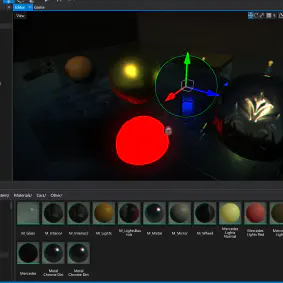

After watching a curious review on the GameFromScratch channel, I tried a new kid in town: Flax Engine. It’s a C++/C# engine heavily inspired by Unity. Migrating from Unity is straightforward. After just a few weeks of playing with it, I decided to invest in it. I am planning to port my closest-to-finished game to it by the end of the year. It’s 1 step back, 2 steps forward.

Cons

👎 Like Godot, there is currently no way to drag-and-drop assets and actors of a specific class. I always have to ask for a generic Actor in the editor and then check in code whether it has the given class. Annoying and error-prone.

👎 Still lacking several common features compared to Unity and Unreal. It’s evolving and, most importantly, its competitors pave the way for inspired clones like Flax.

👎 Minuscule community compared to other game engines, even the indie ones. Recent GitHub reports on the biggest open source projects do not place Flax in the top 10.

Neutral

😐 Not FLOSS. It’s open source but it’s not free. The license requires paying royalties. It’s very close to what Unreal asks but more generous. I would love to see it convert to a full FLOSS model in the future.

👎 Old C#/.NET version. A branch with the newest .NET 7 has been created and is being developed. The current version uses .NET Framework 4.8, which is a pain to install on Linux.

👎 Still lacking a Docker image for CI/CD (well, Unity and Unreal do not have official ones either). I may create a repository for it myself, inspired by GameCI ;)

Pros

👍 1-to-1 adaptation for a Unity developer. It’s not as feature-rich, but it’s very competent.

👍 Open community. There are a lot of issues and pull requests on the project’s GitHub page. I’ve been talking to the devs in a Discord channel and they are receptive.

👍 Small footprint. The editor is only a couple of megabytes and the “cooked” game is also small. If it turns out to be possible, running it on an Alpine-like base would produce a minuscule image to use in CI/CD.